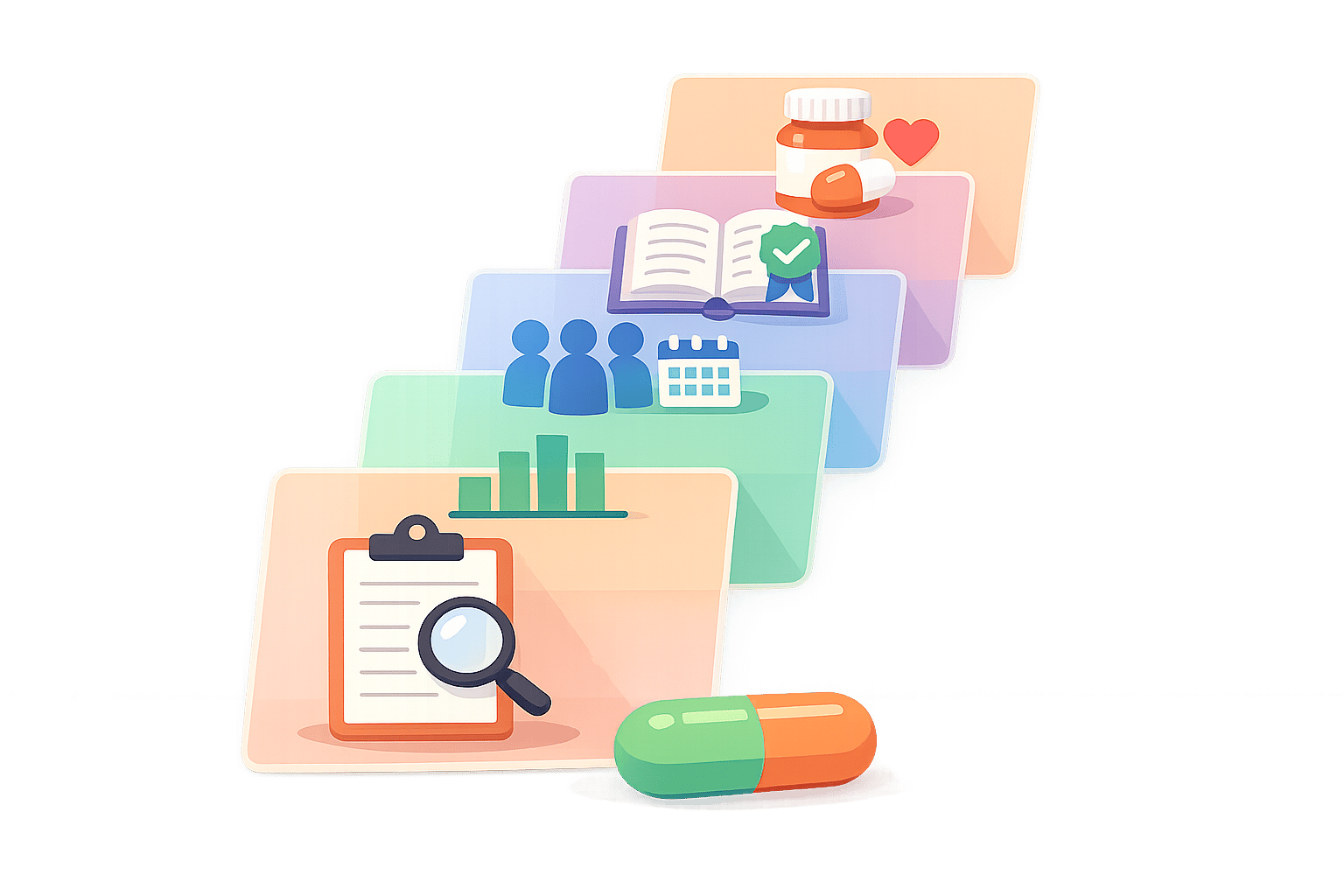

5 Steps to Read Supplement Study Results

When supplement labels claim they’re “scientifically backed,” it’s easy to be misled. Many studies are cherry-picked or based on weak evidence. To truly assess whether a supplement works, you need to evaluate the research behind it. Here’s how:

- Check Study Design: Look for randomized, double-blind, placebo-controlled trials. These design features reduce bias and make results more reliable.

- Assess Statistical Data: Focus on p-values (by convention, ≤0.05 indicates statistical significance) and confidence intervals. But remember, statistical significance doesn’t always mean practical impact.

- Evaluate Sample Size & Duration: Larger groups and longer trials are more reliable. Watch for high dropout rates, which can skew results.

- Verify Peer Review: Ensure the study is published in a reputable, peer-reviewed journal. Avoid predatory or non-reviewed sources.

- Compare Study to Real Use: Match the dosage, timing, and participant demographics to your situation. Results may vary based on how the supplement is used.

If this feels overwhelming, tools like SlipsHQ can simplify the process by analyzing supplement research and providing trust scores based on safety, efficacy, and quality. Always dig deeper before trusting bold claims.


Step 1: Check if the Study Was Randomized and Controlled

When reviewing studies on supplements, it's crucial to ensure the research design is solid. The phrase "randomized, double-blind, placebo-controlled trial" is considered the gold standard in biomedical research. Here's why these elements matter:

- Randomization: This process assigns participants to either the supplement or control group purely by chance. It ensures that factors like age, diet, or exercise habits are evenly distributed. Without randomization, it's tough to determine whether the supplement itself caused the observed effects.

- Control Group: A control group, often given a placebo that looks identical to the supplement, provides a baseline for comparison. This allows researchers to isolate the supplement's true impact.

- Blinding: In double-blind studies, neither participants nor researchers know who is receiving the supplement or the placebo. This prevents unconscious biases from influencing the outcomes.

To verify these elements, dive into the "Methods" or "Materials and Methods" section of the study. While the abstract might hint at these details, the methods section offers the full picture of how participants were assigned and managed. Look for terms like "randomly assigned", "placebo", or "double-blind." If these words are missing, it’s a warning sign that the study might not be as reliable as it seems.

Also, check the results for a breakdown of baseline characteristics across groups. If one group stands out in terms of age, health, or activity level, it could mean randomization didn’t work as intended. Similarly, if one group has a high dropout rate, it might distort the findings or hide potential side effects. Once you've assessed these factors, you're ready to move on to Step 2 to analyze statistical significance.
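To make randomization and the baseline check concrete, here is a minimal Python sketch. The participant ages are invented for illustration, and the helper names `randomize` and `baseline_gap` are hypothetical; real trials use more careful allocation schemes and compare many baseline characteristics, not just age.

```python
import random
from statistics import mean

def randomize(participant_ids, seed=None):
    """Shuffle participants and split them into two equal-sized arms by chance."""
    rng = random.Random(seed)
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]

def baseline_gap(values_a, values_b):
    """Absolute difference between the two arms' means for one characteristic."""
    return abs(mean(values_a) - mean(values_b))

# Hypothetical participants: id -> age (made-up data, ages 20 to 59)
ages = {i: 20 + (i * 7) % 40 for i in range(200)}

supplement, placebo = randomize(ages.keys(), seed=42)
gap = baseline_gap([ages[i] for i in supplement], [ages[i] for i in placebo])
print(f"Mean age gap between arms: {gap:.1f} years")
```

With chance-based assignment the gap is usually small relative to the spread of ages. A large gap in a study's baseline table is the kind of imbalance worth questioning.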

Step 2: Look for Statistical Significance

Once you've confirmed the study's design is solid, it's time to dig into the data. Start by checking whether the results are statistically significant. Keep in mind, "significant" in this context doesn't mean "important" or "life-altering." Instead, it means that a result at least as large as the one observed would be unlikely to occur by random chance alone if the supplement truly had no effect.

The key number to look for is the p-value, which you'll typically find in the study's "Results" section. By convention, a p-value of 0.05 or less is treated as statistically significant. Alongside p-values, pay attention to 95% confidence intervals (CI). For a difference between groups, a CI that includes zero (e.g., –0.19 to 0.12) means the result isn't statistically significant at that level; for ratios such as relative risk or odds ratios, the value to watch for is 1 rather than zero. Understanding these metrics will help you make sense of the findings before moving on to evaluate the sample size and study duration.
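The link between a p-value and a confidence interval can be sketched in a few lines of Python. This is a simplified illustration that assumes a normal approximation for the difference between two group means; the function name `diff_ci_and_p` and all input numbers are hypothetical, and published studies often use other tests.

```python
from math import sqrt
from statistics import NormalDist

def diff_ci_and_p(mean_a, sd_a, n_a, mean_b, sd_b, n_b, confidence=0.95):
    """Normal-approximation CI and two-sided p-value for mean_a - mean_b."""
    diff = mean_a - mean_b
    se = sqrt(sd_a**2 / n_a + sd_b**2 / n_b)          # standard error of the difference
    z_crit = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    ci = (diff - z_crit * se, diff + z_crit * se)
    p = 2 * (1 - NormalDist().cdf(abs(diff / se)))    # two-sided p-value
    return diff, ci, p

# Hypothetical trial A: small difference between supplement and placebo
diff, ci, p = diff_ci_and_p(2.0, 4.0, 50, 1.5, 4.0, 50)
print(f"A: diff={diff:.2f}, 95% CI=({ci[0]:.2f}, {ci[1]:.2f}), p={p:.3f}")

# Hypothetical trial B: larger difference, same spread and group sizes
diff, ci, p = diff_ci_and_p(3.5, 4.0, 50, 1.5, 4.0, 50)
print(f"B: diff={diff:.2f}, 95% CI=({ci[0]:.2f}, {ci[1]:.2f}), p={p:.3f}")
```

In trial A the interval spans zero and the p-value is above 0.05. In trial B the interval sits entirely above zero and the p-value is below 0.05. Under this approximation the two checks always agree.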

It's also crucial to separate statistical significance from clinical relevance. Just because a result is statistically significant doesn't mean it has practical or meaningful implications. For instance, in large studies, even very small differences can reach statistical significance. Always take a close look at the actual size of the effect, not just whether it meets the significance threshold.
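A quick calculation shows how a trivially small effect can still clear the significance threshold once the sample is large enough. The numbers below are invented, the p-value uses a normal approximation, and the effect size is Cohen's d (difference divided by the standard deviation), for which values under about 0.2 are conventionally described as small.

```python
from math import sqrt
from statistics import NormalDist

def two_sided_p(diff, sd, n_per_group):
    """Normal-approximation p-value for a difference between two equal-sized groups."""
    se = sd * sqrt(2 / n_per_group)
    return 2 * (1 - NormalDist().cdf(abs(diff / se)))

def cohens_d(diff, sd):
    """Standardized effect size: the difference in units of standard deviation."""
    return diff / sd

# Hypothetical outcome: 0.3-point improvement on a scale with SD of 5 points
diff, sd = 0.3, 5.0
for n in (50, 10_000):
    print(f"n={n:>6} per group: p={two_sided_p(diff, sd, n):.5f}, d={cohens_d(diff, sd):.2f}")
```

The effect size is identical in both rows; only the p-value changes. That is why the size of the effect deserves as much attention as the significance label.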

Be cautious with studies that lack clear statistical details. When a study reports "no effect", it often means no statistically significant effect was found. This doesn't rule out small changes - they might simply be too minor to distinguish from random variation. Also, watch for p-hacking, where researchers test many outcomes or run many analyses but report only those that cross the significance threshold. The more comparisons a study makes, the more likely it is that at least one appears significant by chance alone.
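To see why selectively reporting outcomes is a problem, consider how quickly false positives accumulate. The sketch below assumes the outcomes are independent and that the supplement truly does nothing, which is a simplification. It also shows the Bonferroni correction, one common (and conservative) way researchers adjust the threshold.

```python
def chance_of_false_positive(num_tests, alpha=0.05):
    """Probability that at least one of several independent tests is 'significant' by chance."""
    return 1 - (1 - alpha) ** num_tests

def bonferroni_threshold(num_tests, alpha=0.05):
    """Stricter per-test threshold that keeps the overall false-positive rate near alpha."""
    return alpha / num_tests

for k in (1, 5, 10, 20):
    print(
        f"{k:>2} outcomes tested: "
        f"{chance_of_false_positive(k):.0%} chance of at least one false positive, "
        f"Bonferroni threshold p <= {bonferroni_threshold(k):.4f}"
    )
```

If a paper measured many outcomes and only one reached p ≤ 0.05, treat that result as tentative unless the authors corrected for multiple comparisons.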

"For scientists, significant doesn't mean important - it means statistically significant. An effect is significant if the data collected over the course of the trial would be unlikely if there really was no effect."

– Examine.com

Step 3: Review Sample Size and Study Length

Once you've confirmed statistical significance, it's time to ensure the study had enough participants and ran for an appropriate duration. Why? Because larger sample sizes help smooth out random fluctuations. For instance, observing a change in 1,000 participants is far more dependable than seeing the same change in just 10 people. Without enough participants, even the best-designed study can miss real benefits - or worse, identify effects that don't actually exist.
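Researchers often estimate the required number of participants with a power calculation before the trial starts. The sketch below uses a standard normal-approximation formula for comparing two group means, n per group = 2(z₁₋α/₂ + z_power)² / d², where d is the expected standardized effect size. The function name is hypothetical, and exact methods based on the t-distribution give slightly larger numbers.

```python
from math import ceil
from statistics import NormalDist

def participants_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate sample size per arm to detect a standardized effect (Cohen's d)."""
    if effect_size <= 0:
        raise ValueError("effect_size must be positive")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)   # two-sided significance threshold
    z_power = NormalDist().inv_cdf(power)           # desired chance of detecting a real effect
    return ceil(2 * (z_alpha + z_power) ** 2 / effect_size ** 2)

for d in (0.8, 0.5, 0.2):
    print(f"Expected effect d={d}: about {participants_per_group(d)} participants per group")
```

The smaller the expected effect, the more participants are needed. A trial with a few dozen people can only reliably detect fairly large effects.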

The study's length is just as crucial, especially for supplements that promise long-term benefits. Many supplements need weeks, sometimes months, to show their full potential. Take a 12-month study on a hair growth supplement, for example - it revealed gradual improvements over time. This highlights how shorter trials might miss cumulative effects, leaving an incomplete picture.

Another factor to watch for is dropout rates in long-term studies. High dropout rates shrink the final sample size, which can undermine the reliability of the results. To evaluate this, check the study's "Results" section and compare the number of participants who finished the trial to the number who started. Small samples are common to begin with: a review of 137 trials found a median sample size of just 68 participants, and many studies failed to explain how they determined their sample sizes.
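The dropout comparison is simple arithmetic, sketched below in Python. The enrollment and completion counts are invented, and the 20% cutoff is an illustrative rule of thumb assumed for this example, not a threshold taken from any particular guideline.

```python
def dropout_rate(enrolled, completed):
    """Fraction of enrolled participants who did not finish the trial."""
    if enrolled <= 0 or not 0 <= completed <= enrolled:
        raise ValueError("need enrolled > 0 and 0 <= completed <= enrolled")
    return (enrolled - completed) / enrolled

# Hypothetical counts as they might appear in a study's participant flow: (enrolled, completed)
arms = {"supplement": (60, 42), "placebo": (60, 55)}
CAUTION_THRESHOLD = 0.20  # assumed rule of thumb for this example

for name, (enrolled, completed) in arms.items():
    rate = dropout_rate(enrolled, completed)
    flag = " <- look for an explanation" if rate > CAUTION_THRESHOLD else ""
    print(f"{name}: {rate:.0%} dropped out{flag}")
```

A big difference in dropout between arms, as in this made-up example, is worth a closer look because it may point to side effects or other problems in one group.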

"The larger the sample size of a study (i.e., the more participants it has), the more reliable its results."

– Examine.com

Step 4: Verify Peer-Reviewed Publication

After evaluating the study design and data, the next critical step is confirming whether the research was published in a peer-reviewed journal. Peer review is a rigorous process where journal editors send submitted papers to independent experts in the same field. These experts assess the study's quality, data accuracy, and originality before it gets published. This process is designed to catch issues like flawed methods, biased data, or unsupported conclusions that could mislead readers. Here’s how you can check if a study has undergone peer review.

"The reviewers determine if the article should be published based on the quality of the research, including the validity of the data, the conclusions the authors' draw and the originality of the research."

– LibGuides at Oregon State University

To confirm a study's peer-reviewed status, start by visiting the journal's website. Check the "Information for Authors" section - most publishers clearly outline their peer-review process there. Alternatively, search for the journal in academic databases like PubMed or Academic Search Complete, which often tag peer-reviewed articles. Another helpful tool is Ulrichsweb, a directory commonly used by libraries that flags "refereed" (peer-reviewed) journals.

Stay away from unpublished or self-published research entirely. Studies that skip peer review - such as conference abstracts, internal reports, or articles published in predatory journals - lack the quality control needed to ensure reliable results. Predatory journals, in particular, are a significant concern. They often charge authors fees while offering little to no editorial oversight, promising quick publication instead. Warning signs include journals covering unrelated fields, missing or unverifiable editorial boards, and vague contact information.

The importance of checking sources becomes clear when considering that 69% to 72% of health claims in UK national newspapers rely on weak evidence. Peer review is not a guarantee of quality, but it acts as a critical safeguard, filtering out many unverified claims and holding supplement research to scientific standards.

Step 5: Compare Study Conditions to Actual Use

This step shifts the focus from how studies are designed to how they relate to your actual supplement use. Even the most rigorous, peer-reviewed studies can lead to confusion if their protocols don't match how you take the supplement. Differences in dosage, timing, or administration methods between research settings and product labels can significantly affect outcomes.

Start by digging into the Methods section of the study. Look for details about dosage, frequency, and duration. Then, compare these to your supplement's label. Pay close attention to measurement units - there’s a big difference between 500 mg and 500 mcg. Labels often display the % Daily Value (%DV), which reflects nutrient contributions to a standard 2,000-calorie diet. However, clinical trials frequently use higher, therapeutic dosages that may not match what's on the label.
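Unit mix-ups are easy to catch with a quick conversion. The sketch below converts everything to micrograms (1 mg = 1,000 mcg, 1 g = 1,000 mg) and reports what fraction of the studied dose a label provides. The function names and doses are hypothetical, and units such as IU are left out because their conversion depends on the specific nutrient.

```python
# Conversion factors to micrograms for mass-based units only
TO_MCG = {"mcg": 1, "mg": 1_000, "g": 1_000_000}

def to_mcg(amount, unit):
    """Convert a dose to micrograms; raises ValueError for units not listed above."""
    try:
        return amount * TO_MCG[unit.lower()]
    except KeyError:
        raise ValueError(f"Unsupported unit: {unit!r}") from None

def label_vs_study(label_amount, label_unit, study_amount, study_unit):
    """Fraction of the studied dose that the product label actually provides."""
    return to_mcg(label_amount, label_unit) / to_mcg(study_amount, study_unit)

# Hypothetical case: the study used 500 mg per day, the label lists 500 mcg per serving
ratio = label_vs_study(500, "mcg", 500, "mg")
print(f"Label provides {ratio:.1%} of the studied dose")
```

In this made-up case the two numbers look identical at a glance, yet the label delivers a thousandth of what the trial tested.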

How you take the supplement matters, too. For example, fat-soluble supplements require dietary fats for proper absorption. If the study instructed participants to take the supplement with a meal but you take it on an empty stomach - or vice versa - the results might not align. Check whether the study specified taking the supplement with food, on an empty stomach, or at specific times, and then compare that to your routine.

Participant demographics also play a big role. A supplement tested on college athletes might not deliver the same results for postmenopausal women. Examine.com highlights this point:

"A compound or intervention that is useful for one person may be a waste of money - or worse, a danger - for another."

Take glutamine as an example. Early studies showed it provided significant benefits for burn victims with amino acid deficiencies. However, healthy individuals saw little to no effect. Reviewing participant details like age, sex, lifestyle, and health status in the study's Methods section can help you determine if the findings are relevant to you.

To simplify this process, tools like SlipsHQ can help you match study protocols to your supplement label, ensuring your usage aligns with research-backed recommendations. This final step wraps up the guide to understanding supplement study results.

Conclusion

You don’t need to be a scientist to make sense of supplement study results - just a clear, structured approach. A five-step framework can help you cut through marketing hype and focus on the facts: look for randomized controlled trials, check for statistical significance, assess sample sizes, confirm peer-reviewed publication, and compare study conditions to how supplements are actually used.

As Examine.com puts it:

"Knowing how researchers arrived at a conclusion is as important as the conclusion itself."

This method ensures you're not swayed by cherry-picked data and helps you make informed choices.

Of course, manually evaluating supplements can be a slog. That’s where SlipsHQ comes in. The app simplifies the process by analyzing research through a 35-factor trust score, which considers study quality, safety warnings, ingredient purity, and even personalized health recommendations. With just a barcode scan, you can instantly access science-backed insights, saving you time and helping you build a supplement routine tailored to your needs.

SlipsHQ takes care of the heavy lifting, giving you clear, reliable information without the hassle - grounded in solid research and transparency.

FAQs

What should I look for to tell if a supplement study is trustworthy?

To gauge whether a supplement study is reliable, start by looking at the source. Was it published in a well-known, peer-reviewed journal? This can be a strong indicator of credibility. Then, dig into the study design - did it include a control group and a large enough sample size? These elements are essential for producing trustworthy results.

Another critical step is seeing if the findings have been independently verified or replicated by other researchers. Studies that stand up to repeated testing are generally more dependable.

Also, keep an eye out for conflicts of interest, like funding from supplement companies, which could influence the results. And think about whether the study's conclusions align with your personal health goals. By considering these factors, you’ll have a clearer picture of how much trust to place in the study.

Why does statistical significance matter when reviewing supplement study results?

Statistical significance is a key concept in research, especially when evaluating supplement studies. It helps researchers figure out if the results are likely due to the supplement being tested or just random chance. Essentially, it’s a way to judge whether the findings are solid enough to dismiss the idea that the supplement has no effect at all.

That said, statistically significant results don’t always translate into something that’s practically or clinically meaningful. For example, a study might show a statistically significant improvement, but if the actual effect is tiny, it might not have much impact on your health or wellness. So, while statistical significance is an important marker of study quality, it’s just as critical to look at the real-world implications - like whether the results are substantial enough to matter in everyday life.

How can I compare study conditions to my own supplement use?

When evaluating how study conditions align with your supplement usage, it's essential to focus on key aspects like dosage, duration, and the characteristics of the study participants (such as their age, health status, and lifestyle). These factors play a big role in determining how applicable the study's findings are to your situation. For example, if a study uses higher doses or takes place in a controlled setting, the results might not directly translate to everyday use.

It's equally important to consider the study's credibility. Check whether the research has been independently reviewed or certified by reputable organizations. Look out for any safety concerns mentioned, such as interactions with medications or specific health conditions, and see if any side effects were reported.

Tools like SlipsHQ can make this process easier by helping you assess product safety, ingredient quality, and overall reliability. This ensures your supplement choices are backed by evidence and safe for regular use.